01/27/2021

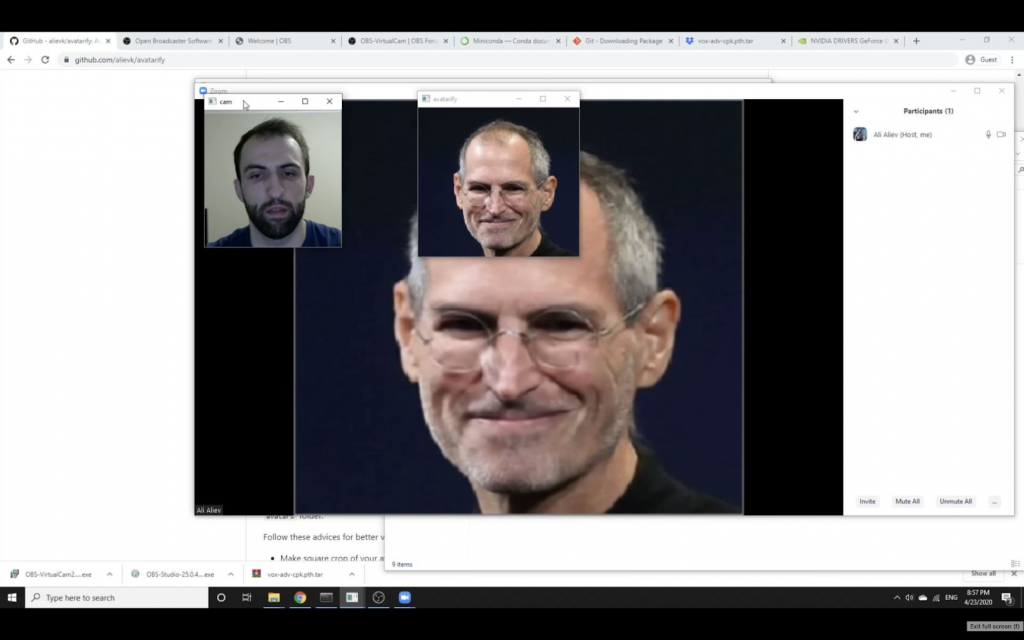

Gemini analysts have observed an increasing number of posts on dark web forums about using face-change technology to bypass sites’ selfie- or video-based identity verification. Until recently, actors relied on less advanced, long-established software, such as the Face Swap feature in Adobe Photoshop. Now, however, threat actors have begun turning to more powerful software, such as DeepFaceLab and Avatarify. These tools leverage advancements in machine learning, neural networks, and artificial intelligence (AI) to create “deepfake” counterfeits. Deepfakes are images or videos in which the content has been manipulated so that an individual’s appearance or voice looks or sounds like that of someone else. Currently, widely available deepfake detection technology lags behind deepfake creation technology; counterfeits can only be detected after careful analysis using specialized AI, which has a detection rate of roughly 65%.

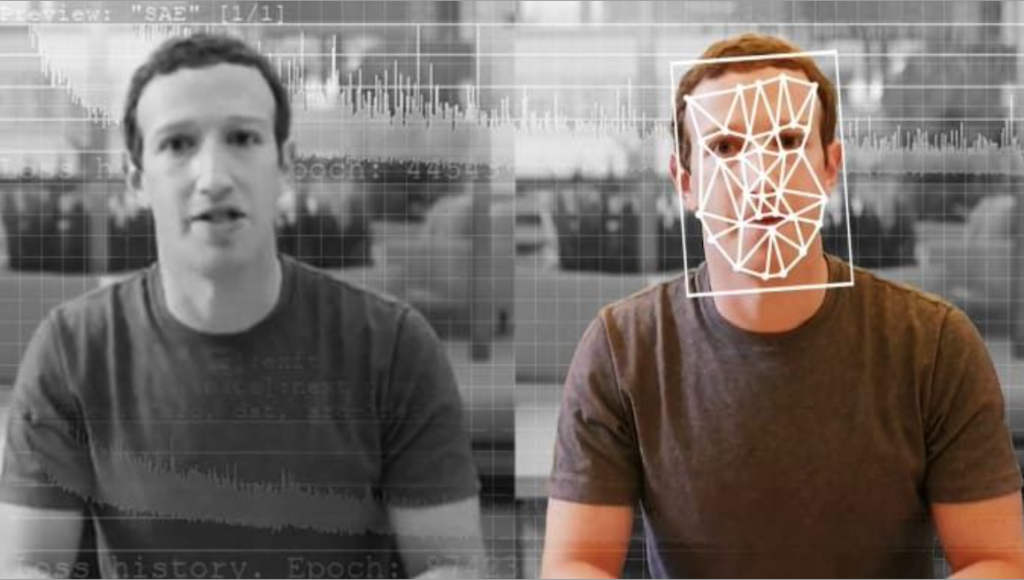

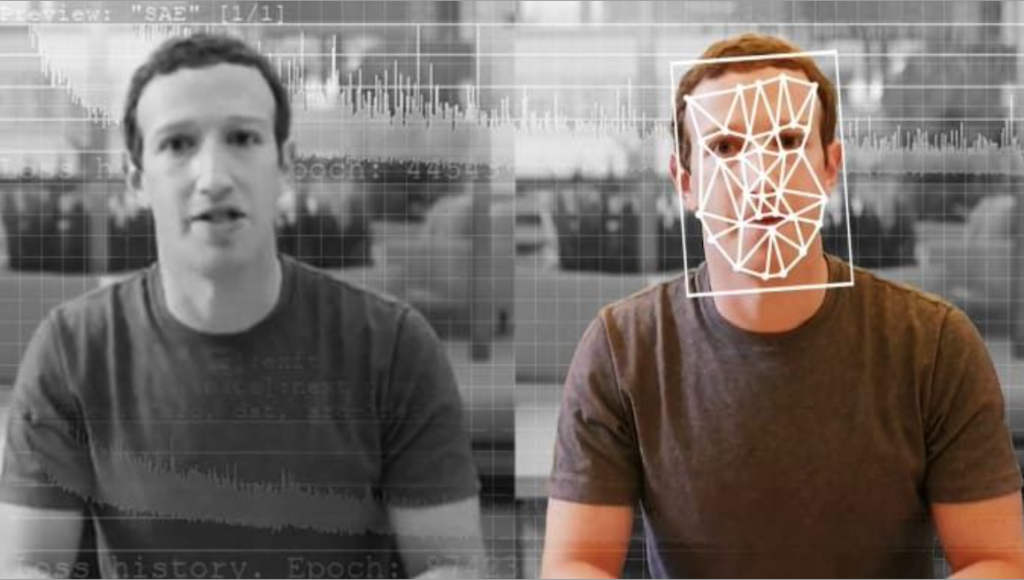

Video: Demonstration of deepfake technology and implications for malicious use (full video via NOVA PBS Official; https://www.youtube.com/watch?v=T76bK2t2r8g).

Virtual Verification

All regulated financial companies operating in the United States are required to identify users on their platforms in order to comply with Know Your Customer (KYC) and anti-money laundering (AML) laws. The EU has similar laws, such as AML4, and other countries implement their own versions of these laws. Traditionally, companies have relied on reputable third-party services, such as credit bureaus and knowledge-based authentication (KBA), among other methods, to identify users. Recently, however, many companies, from banks to cryptocurrency exchanges, have been turning to image- and video-based verification. Most of these companies require users to upload an official ID, a selfie, or a specifically constructed selfie based on instructions such as holding up fingers or holding a note. Some companies have gone as far as requiring a live video feed in which the user must perform specific gestures and movements.

In addition to ID verification, a new market is emerging for video verification services. The ID verification giant ID.me, which provides virtual ID verification services to various entities including government agencies, already has an established video verification service, while other verification companies, such as ondato.com, jumio.com, basisid.com, and fully-verified.com, are starting to offer their own video verification services. According to ID.me, these services can be used to verify the identity of individuals who have frozen or limited credit lines, have outdated information stored in the credit bureaus, use unregistered prepaid phones, or do not have a permanent address. However, with deepfake technology, cybercriminals could exploit any of these use cases to imitate victims and gain access to previously inaccessible or protected accounts.

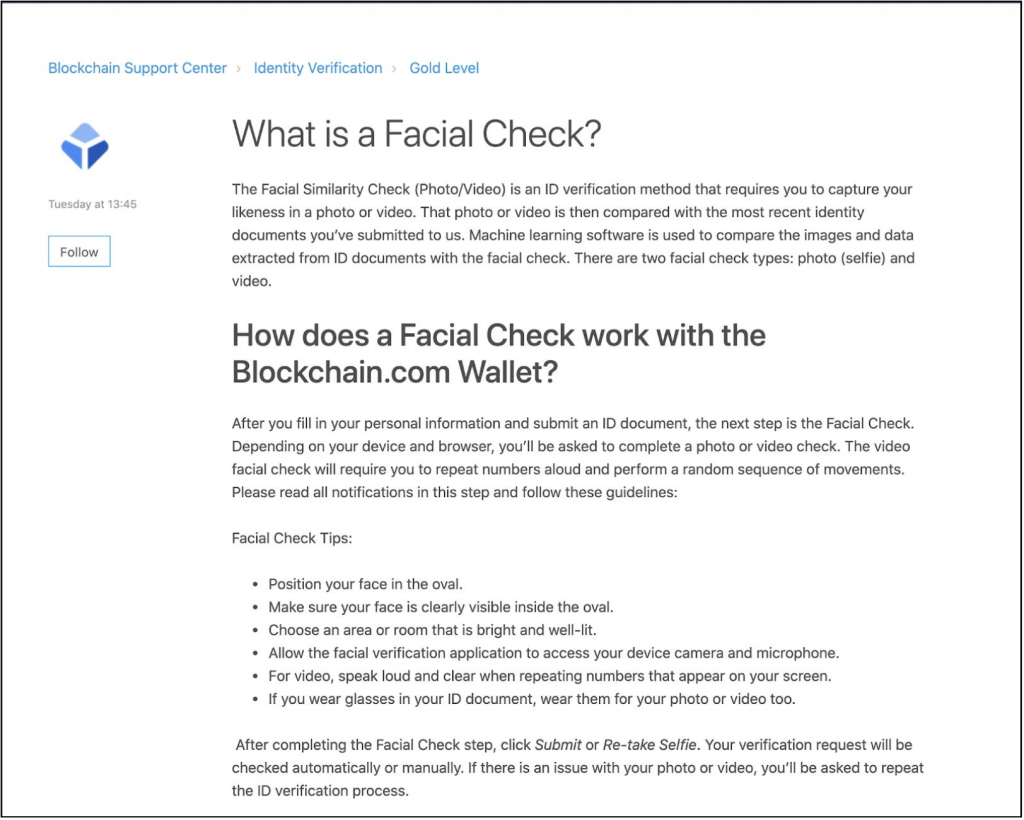

Aside from third-party verification services, some companies have implemented their own image- or video-based verification processes. The cryptocurrency exchanges Blockchain and Binance, for example, require users applying for accounts with higher transaction limits to upload an official ID and take a selfie. The exchanges then verify the selfie against the picture on the official ID to confirm the user’s identity. The online bank N26, by contrast, requires its members to confirm their identities via video verification. According to The Verge, it also appears that Amazon has started a new video verification process for new sellers, which could expand to existing sellers in the near future.
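The selfie-to-ID comparison described above can be illustrated with a minimal sketch. It assumes each face image has already been converted to a numeric embedding by some face-recognition model; the vectors, the `faces_match` helper, and the 0.8 threshold are all hypothetical and do not reflect how any named exchange implements its check.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embeddings must be non-zero")
    return dot / (norm_a * norm_b)


def faces_match(id_embedding, selfie_embedding, threshold=0.8):
    """Hypothetical decision rule: accept when similarity meets the threshold."""
    return cosine_similarity(id_embedding, selfie_embedding) >= threshold


# Made-up embeddings for illustration only; real models emit far longer vectors.
id_photo = [0.12, 0.80, 0.35, 0.44]
selfie_same = [0.10, 0.78, 0.38, 0.47]
selfie_other = [0.90, 0.05, 0.10, 0.02]

print(faces_match(id_photo, selfie_same))
print(faces_match(id_photo, selfie_other))
```

A check of this kind only compares two static images, so any manipulated selfie whose embedding lands close enough to the ID photo would be accepted. That is the weakness deepfake tooling targets.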

Forum Activity

Over the past year, cybercriminals on dark web forums have begun discussing ways to use deepfake technology to bypass the ID verification and video verification systems of banks, cryptocurrency exchanges, and other institutions. The majority of posts have involved an actor either offering their deepfake creation services to other actors or including deepfake instructions as part of tutorials for bypassing specific institutions’ security measures. Actors providing deepfake creation services typically request between $10 and $30 per minute of video, though some actors quote prices in the hundreds, and require several days to create a full video. For tutorials, actors typically provide instructions for how to gain access to compromised accounts on banking or cryptocurrency websites and include links and instructions for deepfake software to deceive verification checks. More broadly, fraudsters on dark web carding forums increasingly indicate they possess the technical ability to create deepfakes but wish to collaborate with other actors to find ways to incorporate deepfakes into new fraud schemes.

Currently, however, actors on dark web forums far more commonly discuss using deepfake technology to create recreational or pornographic content than to commit bank fraud. These more common uses, combined with rising interest in applying deepfakes to fraud schemes, could spur the development of more robust deepfake technology and potentially drive down the price of deepfake services, making them more accessible to the average fraudster.

Examples of Software

Multiple actors on the dark web recommended using DeepFaceLab or Avatarify, likely because both are free, open-source tools that do not require advanced technical knowledge. Previous patterns indicate that free, open-source, and easy-to-use applications such as these are the ones cybercriminals most commonly use to create deceptive content.

DeepFaceLab is one of the most popular deepfake applications and uses artificial neural networks to learn visual and auditory characteristics from an authentic source video, which are then applied to a target video to replace the faces and voices. The software’s source code is hosted on GitHub and was uploaded by the user “iperov.” According to iperov, over 95% of all deepfake videos are created using DeepFaceLab.

Like DeepFaceLab, Avatarify is free software that creates deepfakes by using artificial neural networks to learn and replace faces in videos. The program’s source code is also hosted on GitHub and was uploaded by its author, “alievk.”

Deepfake Detection

The recent rise in more advanced deepfake technology has driven a corresponding increase in the demand for technologies capable of detecting deepfakes. Microsoft announced on September 1, 2020, the release of Microsoft Video Authenticator, a tool that detects “the blending boundary of the deepfake and subtle fading or greyscale elements” to provide a confidence rating of whether the image has been manipulated. While similar technologies have only achieved a precision rate of 65.18% when attempting to identify deepfakes in a simulated real-world setting, the high demand for this technology in spheres outside cybercrime prevention, such as combating disinformation, indicates that more accurate detection tools are likely to be developed.
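To make the precision figure concrete, the sketch below assumes “precision” is used in its standard classification sense: the share of videos flagged as deepfakes that really are deepfakes. The counts are hypothetical, chosen only to land near 65%, and are not taken from any benchmark. Recall, the share of actual deepfakes that get caught, is shown alongside because the two are often confused.

```python
def precision(true_positives, false_positives):
    """Fraction of flagged items that are genuinely deepfakes."""
    flagged = true_positives + false_positives
    if flagged == 0:
        raise ValueError("no items were flagged")
    return true_positives / flagged


def recall(true_positives, false_negatives):
    """Fraction of actual deepfakes that the detector flagged."""
    actual = true_positives + false_negatives
    if actual == 0:
        raise ValueError("no actual deepfakes in the sample")
    return true_positives / actual


# Hypothetical counts for illustration only.
tp, fp, fn = 650, 350, 200

print(f"precision = {precision(tp, fp):.2%}")
print(f"recall    = {recall(tp, fn):.2%}")
```

Under this reading, a precision near 65% would mean that roughly one in three flagged videos is in fact authentic. That is a meaningful cost for any verification workflow that rejects users on the basis of a flag.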

The emergence of free, powerful, and easy-to-use deepfake applications has given rise to a new, viable attack vector. Cybercriminals have already begun to leverage these applications to bypass image- and video-based verification, which, until recently, had proven to be one of the most secure methods of identity verification. While only a few actors currently offer deepfake services, and they often charge high prices for them, the high adoption rate of image- and video-based verification among banking institutions will likely lead to increased criminal demand for deepfake services. Increased criminal demand, combined with high recreational usage and technological advancements driven by adult content in the entertainment industry, could then result in more deepfake service providers and an eventual decline in the cost of these services. Therefore, as more companies turn to virtual identity verification, Gemini analysts assess with a moderate level of confidence that cybercriminals will likely begin to use deepfakes on a larger scale to deceive and bypass facial recognition checks.

Furthermore, Gemini assesses with moderate confidence that real-time video streaming identification that uses time and place stamps, augmented by AI programs that check for signs of human liveness, lighting, and missing pixels, is more effective at minimizing the success rate of deepfakes than other solutions, including verifying IDs against selfies.
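One way to picture the time-stamped, real-time element of such a check is a challenge-response flow: the server asks for a randomly chosen gesture and accepts the answer only within a short window, which leaves little time to render a deepfake in advance. The sketch below is an assumption-laden illustration, not a description of any vendor’s product. The gesture list, the 30-second window, and the `observed_gesture` field (standing in for the output of a separate, hypothetical gesture-recognition step) are all invented for the example.

```python
import secrets
import time

# Hypothetical gesture prompts; a real system would define its own.
GESTURES = ("turn head left", "turn head right", "blink twice", "raise right hand")


def issue_challenge(now=None, ttl_seconds=30):
    """Create a one-time challenge with an unpredictable gesture and an expiry time."""
    now = time.time() if now is None else now
    return {
        "nonce": secrets.token_hex(16),
        "gesture": secrets.choice(GESTURES),
        "expires_at": now + ttl_seconds,
    }


def verify_response(challenge, response, now=None):
    """Accept only a timely response that echoes the nonce and shows the requested gesture."""
    now = time.time() if now is None else now
    if now > challenge["expires_at"]:
        return False  # too slow: the response may have been pre-rendered
    if not secrets.compare_digest(response.get("nonce", ""), challenge["nonce"]):
        return False  # response does not belong to this challenge
    return response.get("observed_gesture") == challenge["gesture"]


challenge = issue_challenge()
reply = {"nonce": challenge["nonce"], "observed_gesture": challenge["gesture"]}
print(verify_response(challenge, reply))
```

A timing check like this does not detect manipulation by itself. It only narrows the window an attacker has, which is why the assessment above pairs it with AI-based analysis of the video.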

Gemini Advisory provides actionable fraud intelligence to the largest financial organizations in an effort to mitigate ever-growing cyber risks. Our proprietary software utilizes asymmetrical solutions in order to help identify and isolate assets targeted by fraudsters and online criminals in real-time.